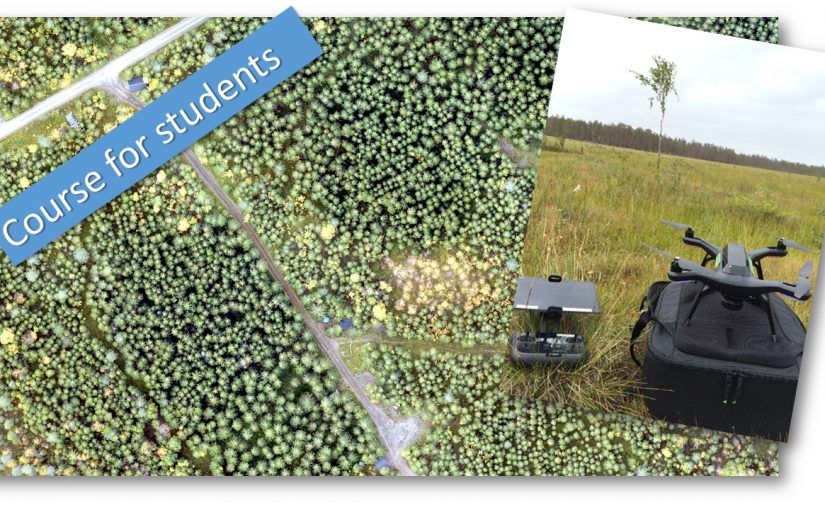

Here are some quick results from the drone camera tests we did two days ago. We flew four different cameras under similar conditions. Ideally, the data set should be used by a student for a project and a deeper evaluation of the cameras. But since we know that many in the forest industry are wondering about the difference between the DJI Phantom 4 Pro camera, with its mechanical shutter and larger sensor, and the DJI Mavic Pro, which is smaller, cheaper and has a poorer camera for photogrammetry, here is a first look at those two.

The drones (and cameras) were flown in the evening with a low sun behind thin clouds, which produced no shadows but also no bright light. This lighting should push the cameras a bit while avoiding the problem of bright, sunlit tree crowns against dark, shadowed ground.

An orthophoto was made in the Pix4D cloud service using default settings. Here are the results:

The Phantom 4 Pro camera (on the left) shows a higher dynamic range and is also less overexposed on the road than the Mavic Pro (right image).
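The visual comparison above could be backed by a few simple numbers. The following is only a minimal sketch, assuming each orthophoto has already been loaded as an 8-bit NumPy array; the function name `exposure_stats`, the saturation level of 255 and the 1st/99th percentile limits are illustrative assumptions, not part of the test we ran. The percentile spread is a crude proxy for how much of the tonal range an image uses, not a measurement of the sensor's dynamic range.

```python
import numpy as np


def exposure_stats(image, saturation_level=255, low_pct=1.0, high_pct=99.0):
    """Summarise exposure for an 8-bit image array, shape (H, W) or (H, W, bands).

    All names and default thresholds here are hypothetical choices for illustration.
    """
    pixels = np.asarray(image, dtype=np.float64)

    # Share of pixel values at or above the saturation level (clipped highlights,
    # e.g. an overexposed road surface).
    overexposed_fraction = float(np.mean(pixels >= saturation_level))

    # Spread between a low and a high percentile: a rough indication of how much
    # of the available tonal range the image actually uses.
    low, high = np.percentile(pixels, [low_pct, high_pct])

    return {
        "overexposed_fraction": overexposed_fraction,
        "percentile_range": float(high - low),
    }
```

Running the function on the same ground area in both orthophotos, for example a crop around the road, would give two sets of numbers to compare side by side.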

For the final test, the Sony a5100 and the Parrot Sequoia need to be added, and the images need to be compared in more depth, along with each camera's performance when generating point clouds.
