- Field visit RS course: Testing the new GeoSLAM hand laser scanner

In the Remote sensing and forest inventory course, we always have a demo of the different sensors and platforms available to the students in the Ljungberg laboratory. This year we brought the …

- SLU internal seminar on new regulations for flying drones

From 1 July, new regulations will apply to operating drones in the airspace. The regulations will be the same throughout the EU. In order to inform the drone …

- Albedometer drone project ongoing

As part of a Design-Build-Test course, five engineering students are building an albedometer sensor system for the new drone in the lab. The albedometer will consist of two MS-80U pyranometers from …

- Students present Master thesis projects at RIU conference

Victor Kingstad and Mårten Tovedal presented their master's thesis projects at the RIU conference in Skinnskatteberg, 13-14 November. The conference is an annual event gathering people from forest planning and forest …

- Point clouds in virtual reality

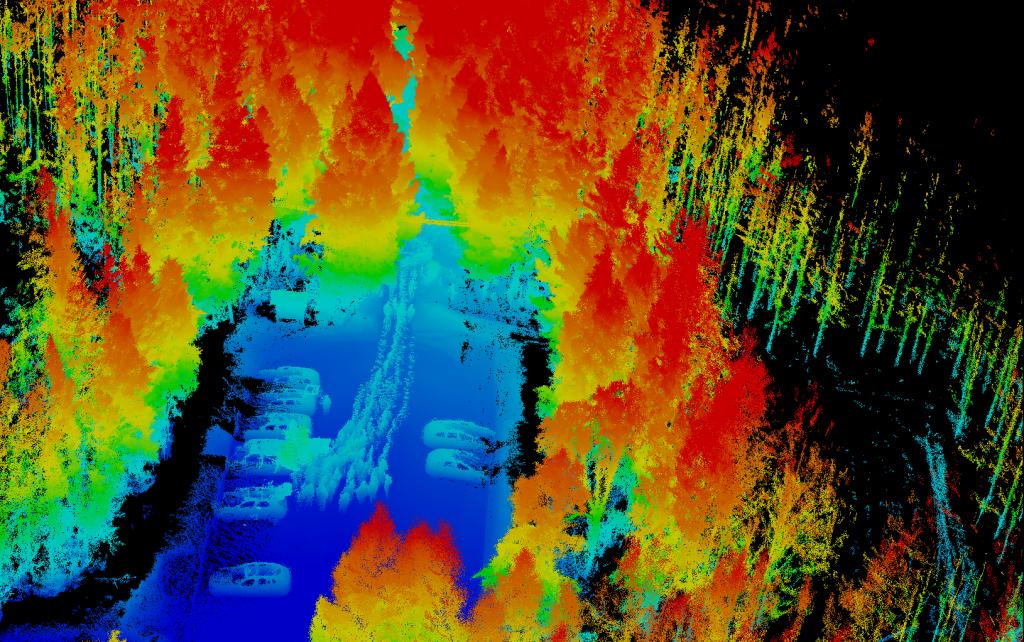

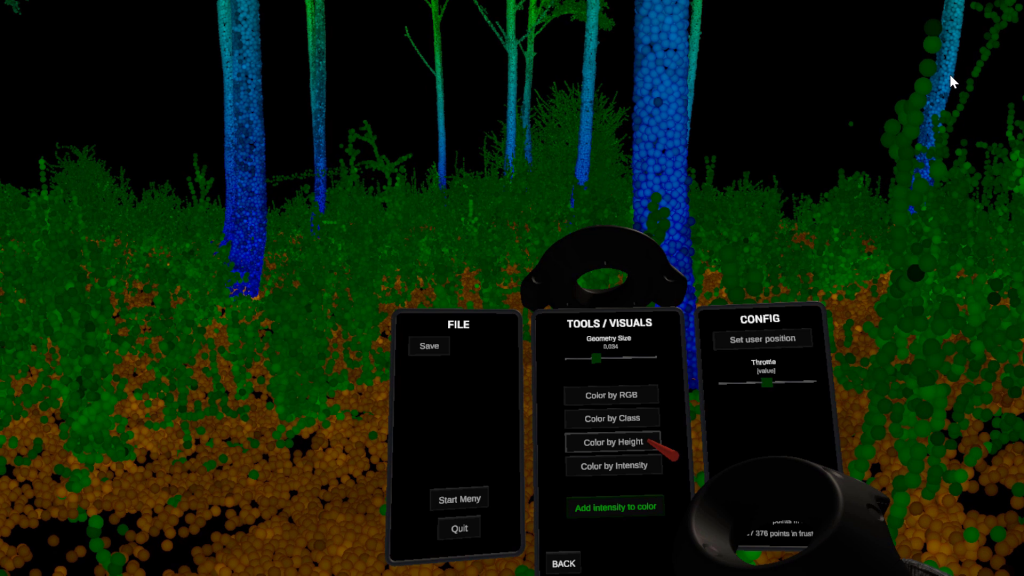

The lab has developed software for viewing and analyzing point clouds in virtual reality. The application is built in Unity and currently supports only the HTC Vive. We have also released the …
