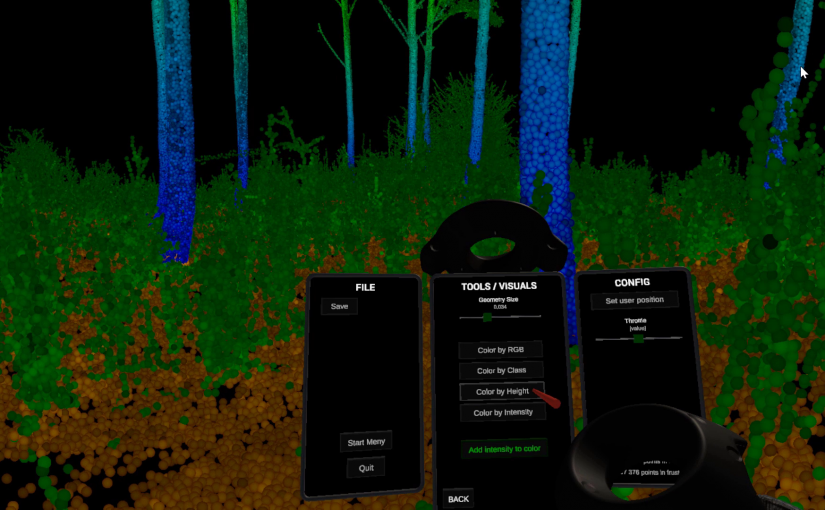

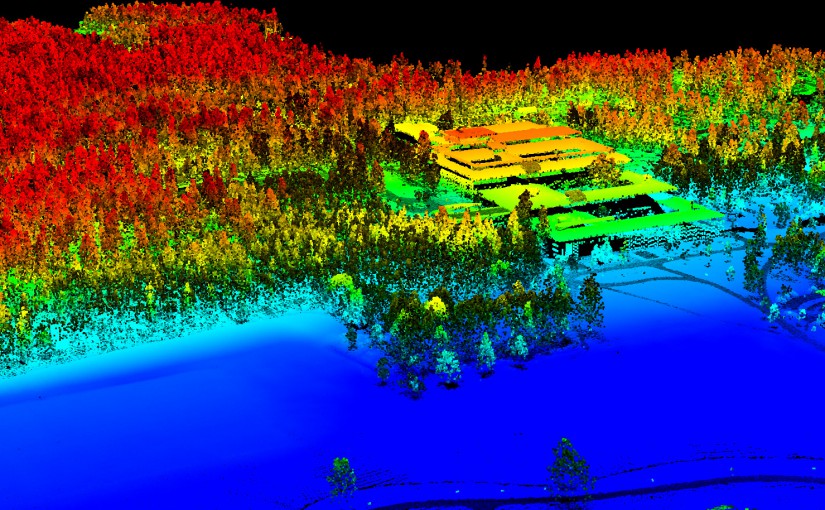

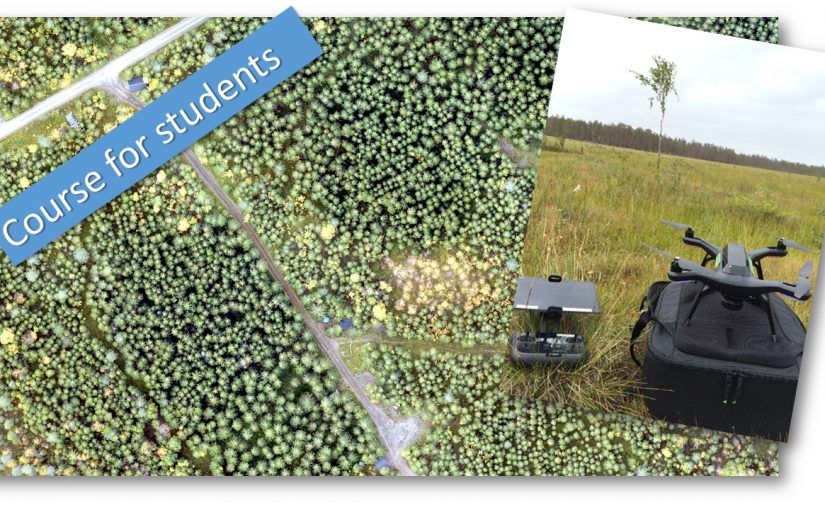

In the Remote sensing and forest inventory course we always hold a demo of the different sensors and platforms available to the students in the Ljungberg laboratory. This year we brought the new handheld laser scanner from GeoSLAM, in addition to the drones (camera and lidar) and the terrestrial laser scanner.

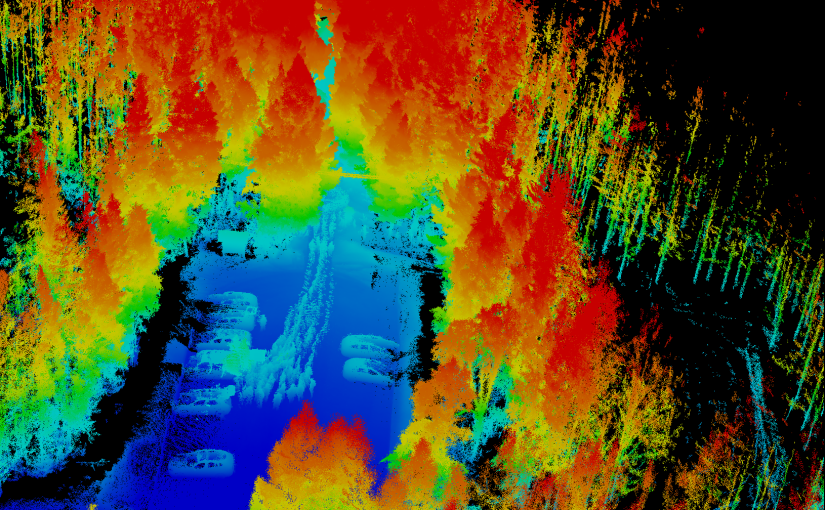

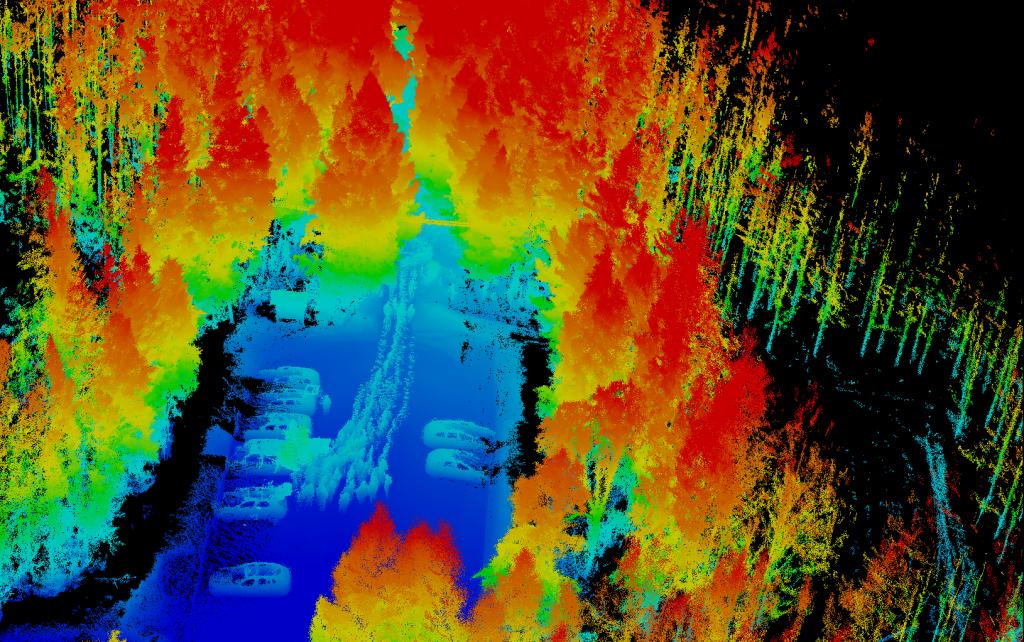

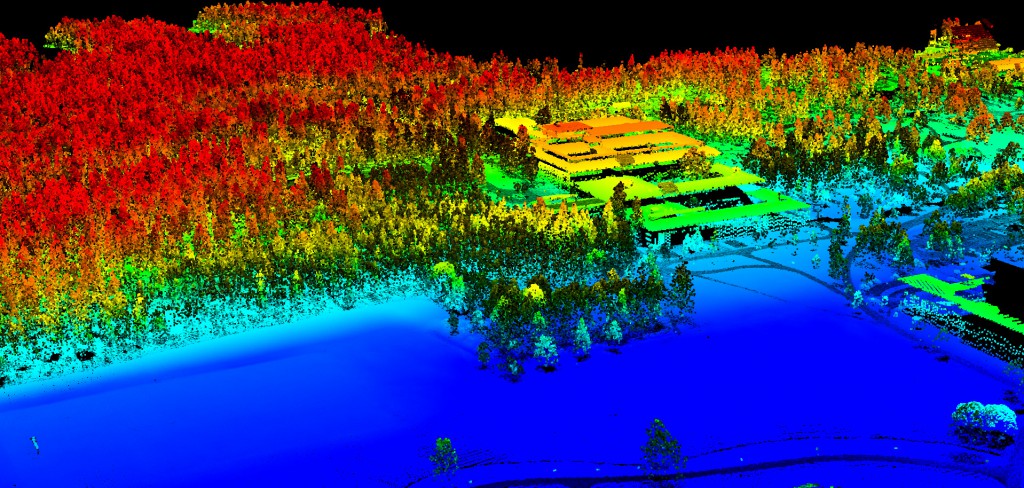

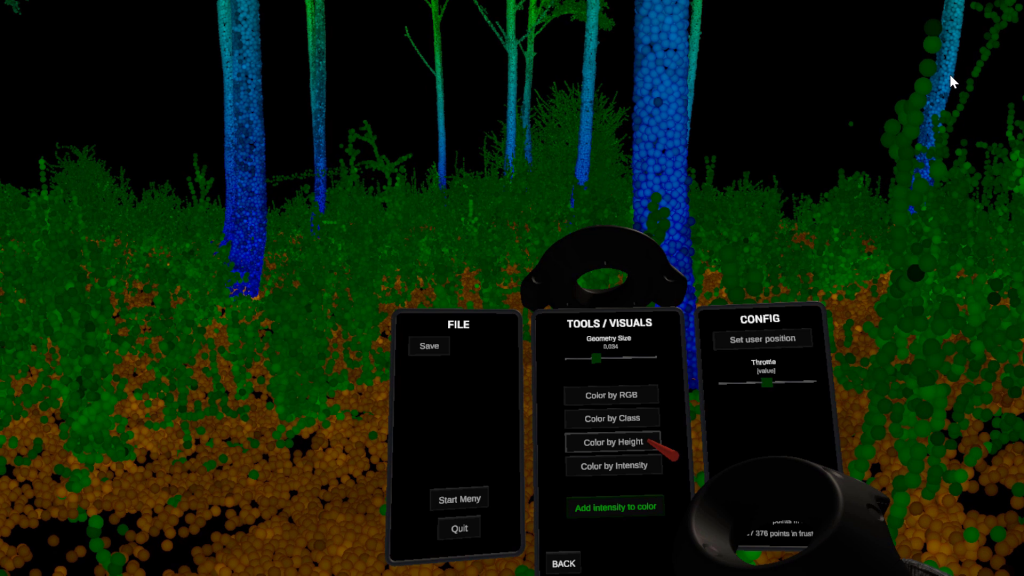

The image is a view of the 3D point cloud generated by the GeoSLAM scanner. The handheld scanner scans everything around you as you move through the forest. Normally the post-processing software removes the person carrying the scanner, but in our case we had 15 students following the scanner, resulting in a line of ghosts in the data.

The demo of the different sensors and platforms is an important part of the course, as it sets the frame for what the students can play with in their final project.